Artificial intelligence (AI) systems provide promise in analyzing and evaluating power system data. There is currently a large push to use AI and machine learning (ML) to help reduce time performing maintenance on transformers and predict where and when the next transformer will fail.1,2,3 Major companies in various industries are promoting and telling the wonders of AI and ML: managing the replacement plans of an ageing or aged fleet, reduction in maintenance while extending asset life, operational efficiency — all while capturing the available expertise so it is not lost. These are lofty goals, and claims are already being made for the benefits of AI applications in the real world. The problem we face is that AI is not perfect — but it still has its role in the analysis of well-described problems with sufficient data to cover all possible situations that may be found.

Let us consider two things that are true in our industry:

• We are almost always faced with incomplete and possibly ambiguous data.

• Data analysis does not take place in a vacuum; we have a history and a knowledge base to call on to check results.

So in simple terms, if an AI system that analyzes data for power transformers is developed, then based on the data available, it should be able to replicate what has already been developed as common knowledge or industry expertise. For example, in DGA analysis, identifying increased levels of acetylene with increased probability of failure should be a rule that is identified.4 If the AI is unable to state the rule in clear terms, then we may not trust other analyses described. We have to have a believable audit trail for the analysis to justify actions.

BUSINESS ENVIRONMENT

In an ideal world, we would have complete and detailed information on every one of our transformers: maintenance history, test data, monitoring data, fault data, and so on. There would be standards and analytic tools to tell us about each individual transformer: health, probability of failure, remaining life, and so on. In practice, data may be incomplete, inconsistent, or missing.

It is common for a subject matter expert (SME) or technician to analyze and evaluate all available data to make decisions about actions and interventions in their region or area. Transformers would be ranked manually and grouped for prioritization of maintenance, replacement, or other intervention. Individual analysis methods may be used by some SMEs but not others, and they may have their own specific approaches, meaning that analysis could be inconsistent based on the region and the individual involved. So the push to more uniform approaches based on AI and ML seems both rational and sensible, especially as many experienced personnel, who understand the data, are retiring.

So what can AI and ML do for us? Some examples of benefits include:5

• In weather forecasting, AI has been used to reduce human error.

• Banks use AI in identity verification processes.

• A number of institutions use AI to support help-line requests, sometimes via chatbots.

• Siri, Cortana, and OK Google all build on AI apps.

• AI systems can classify well-organized data, such as X-rays.

On the downside, there are some issues:6

• AI may be good at interpolation within a dataset, but not at extrapolation to new data.

• “Giraffing” — the generic name for identifying the presence of objects where those objects don’t exist — may provide bias in analysis based on unrepresentative datasets.

• Using a black-box approach may make the reason for a decision not clear and transparent.

In fact, many of the benefits of AI application rely on having clean and well-ordered data. In terms of datamining, it is estimated that 95% of the possible benefits can be achieved through data clean-up and standard statistical methods.7 It is also noted that AI systems can work 24/7 and don’t get bored with repetitive tasks.

So it would seem that an appropriate approach is to apply AI tools where they are strong — analyzing data to identify the majority of standard or normal cases — and allowing the SMEs to concentrate on data that is not clear or needs real attention. Let the AI/ML interpolate but not extrapolate.

MACHINE LEARNING TYPES

In general, machine learning can be split into two similar approaches, both requiring large data sets that are split into test and training subsets:8

a) In supervised machine learning, an expert classifies the data set into different cases, for example, oil samples that indicate overheating or paper degradation. A machine learning tool tries to learn from parameters within the data — for example, hydrogen content, moisture level, presence of PD, etc.— which parameters best reflect the expert classification. Then the resulting tool is tested against new cases to see how effective it is.

b) In unsupervised machine learning, a similar approach is used, but in this case the machine learning tool groups the cases based on clusters in the many dimensions of the data provided. An expert then classifies the resulting clusters and tests against new cases.

As an example, consider an ML tool developed to recognize sheep and/or goats in pictures. In a supervised ML approach, an expert would classify each picture, and the tool would try to find data differences between the pictures that reflect the classification. We may not know why the tool does what it does — the ML can be considered a black box. Once trained, we show the ML tool more pictures for it to classify to see how well it does — and if we just show pictures used in the training data, it will likely do very well. However, when we show it more complex pictures, or pictures of another animal, the ML tool may fail.

In unsupervised ML, the tool clusters the data, and the expert classifies it afterwards. In both supervised and unsupervised ML tools, the ML performs very well when the test cases are similar to the training cases but much less well when the supplied cases are different than the training cases. What happens if there are multiple animals in a picture? Or if there is a llama — how does that get classified? The effect called “giraffing” — where an ML tool trained to identify giraffes in supplied pictures then identifies giraffes in pictures where no giraffe is present — is a result of ML training where giraffes are overrepresented in the training cases, but the cases of “no giraffes” are underrepresented.9 The effect can be seen in a visual chatbot that identifies the content of pictures, but try asking it how many giraffes are in a picture you supply.10

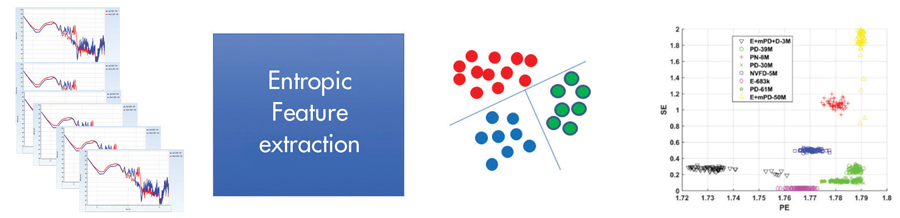

Figure 1 is a high-level view of an ML classification process for EMI spectra conducted by Dr. Imene Mitiche as part of a Doble-sponsored R&D project at Glasgow Caledonian University in the UK (Imene ref). Expert analysis of EMI spectra was initially used as a base for a supervised ML approach where features extracted from the data based on the entropy (orderliness) of the data are used to cluster the data, as shown.

Figure 1: Feature Extraction Approach to EMI Spectra Analysis

The original EMI spectra cases from a number of generator analyses taken around the world are analyzed and classified by an expert. Those classifications are then used to drive the supervised ML analysis based on the entropic features extracted. The supervised approach yielded an accuracy of subsequent test classification of approximately 75%. An unsupervised approach was also performed, using the same entropic data, with the clusters plotted on an entropy chart to indicate the cluster independence. Subsequent classification of the unsupervised clusters yielded accuracy in excess of 80%. The improvement in results from the unsupervised approach demonstrates both the difficulty in classifying the spectra and the benefits of not assuming perfect a priori knowledge from the expert. The resulting ML system is being incorporated into Doble’s EMI survey tools to support users in the field with their analyses.

Standards and guidelines are available to support many analyses, noting that these can be inconsistent and may not provide good interpretation in all cases. In practice, there is a need to focus, as there is a lot of data. For example, Duke Energy has over 10,000 large power transformers (banks > 7.5MVA) in their transformer fleet. These transformers have dozens of data sources from DGA to offline tests to maintenance history to condition monitoring, and they generate millions of individual data points. Like most companies, Duke has ever-fewer people to manage that ageing fleet, and they must be able to focus on what is most critical, most important, and most relevant.

PRACTICALITIES AT DUKE ENERGY

Duke Energy performed exhaustive research over a number of years looking for a good AI/ML tool. By “good,” we mean one that classifies cases well when they are clear, but identifies those that are less clear as needing further analysis. One thing in common to every ML solution they were offered or tried for predictive maintenance was an assumption that, given enough data, we can make accurate predictions using Gaussian modeling of the available data. Unfortunately, that assumption is not true.

Gaussian, or normal, distribution is symmetrical about an expected value. In practice, distributions of DGA values, power factor levels, PD inception voltages, and others are not Gaussian, and that trend follows through the analysis to the point of classification.

In addition, the realities for transformer data include:

• Limited and bad data

• Failure to document and maintain failed asset data

• No investment in cleaning and verifying available data

• Data not normalized across multiple sources nor within a single source

• Unique characteristics of data related to the manufacturing process for sister units (i.e. they’re handmade)

The realities for the data scientists include:

• The answer is assumed to lie in the data available, without necessarily referencing transformer SMEs.

• ML assumes a Gaussian data distribution, but most failure modes are not based on Gaussian data.

• Major companies like Dow Chemical, Audi, and Intel have been open about predictive models for major plant assets not being effective.

• IT and data scientists don’t usually understand failure modes and may not take them into account for their modeling.

Consequently, a lot of time, effort, and resources can be targeted at Ml systems that don’t support the real world. Based on experience and SME inputs, Duke Energy has developed a hybrid model that combines the best of available analysis tools and ML systems to allow SMEs and technicians to focus effectively and access data so they can make the most accurate decisions where they are needed with fewer things slipping through the cracks.

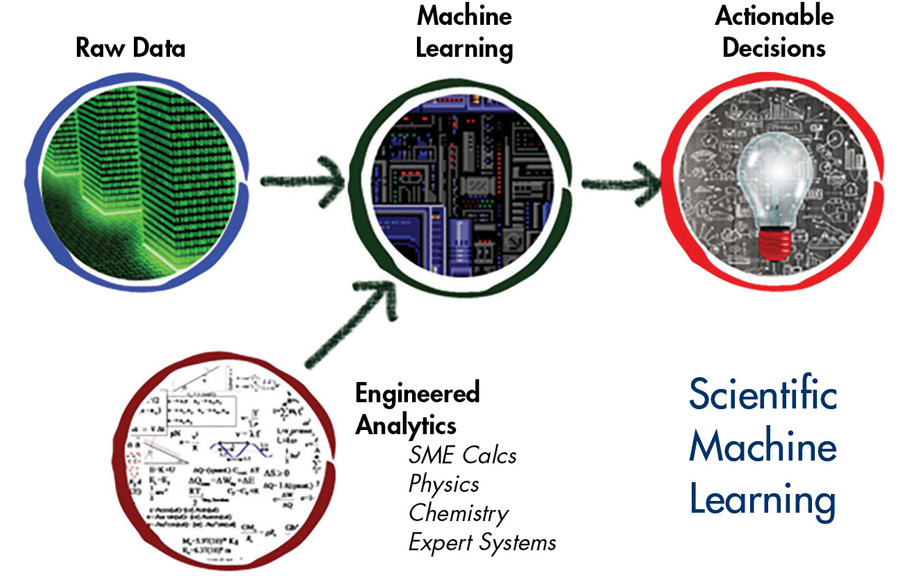

SCIENTIFIC MACHINE LEARNING

Duke’s development of a hybrid model methodology occurred at the same time as biologists and other scientific groups were developing similar techniques and finding that pure machine learning did not produce accurate results in practice. The hybrid approach is now termed ”scientific machine learning” (SciML), where actionable decisions are made based on reliable data supported by subject matter expertise.

SciML is noted for needing less data, being better at generalization, and being more interpretable and more reliable than both unsupervised and supervised machine learning.11 Duke’s use of SciML went into effect in January 2019, while the terminology and papers on the concept from academic and commercial AI/ML platforms didn’t come into common use until late 2019/2020.

SMEs are regularly asked by the asset/finance group to “provide a list of the transformers most likely to fail or in poorest condition for our proactive replacement project.” The response is regionally based, with various spreadsheets, different analyses, and different collations, as some SMEs have over 1,000 transformers to evaluate. Then a call comes in about a failed transformer that’s not on any of the supplied lists. Such failures are inevitable: Not every failure is driven by condition-related failure modes, and not every failure is predictable.

The first step in the development of a useful health and risk management (HRM) tool was to invest in data clean-up and subsequent data-hygiene management. This is an ongoing task and requires constant vigilance to prevent rogue data errors from causing false positives in analyses. Data is made available through a single-user interface, and standard engineering algorithms are applied to identify issues and data that need deeper analysis. Condition-based maintenance data (CBM), load variation, oil test, electrical test, and work order data all provide context in one interface for decision support. Analytics such as the Doble Frank scores (ref), TOA4 gassing scores/severity, and EPRI PTX indices are applied initially, and the results are normalized as a linear feature set that can be analyzed with a supervised ML tool. The combination of approaches allows data related to each transformer to be classified into one of several predefined classifications or states: Normal, Monitor, Service, Stable, Replace, and Risk Identified.

The approach is shown at a high level in Figure 2.

Figure 2: Overview of Hybrid Engineering ML Transformer Fleet Analysis Tool — SciML

The SciML tool takes the best of both worlds, applies standards/guidelines, and benefits from the broad application of ML. The process at Duke has reduced time for SMEs to perform annual fleet evaluations to a few days, rather than several weeks, in a consistent manner across the organization. The number of bad actors slipping through the cracks is lower, but not yet zero.

One of the features of the hybrid system is the ability of the system to change some states automatically:

• A state may be automatically changed to Monitor or Service based on raw data.

• The state may be changed to Risk Identified based on engineering analytics and ML classification.

• No transformer state can be automatically changed to Stable or Replace; that requires SME intervention. The SME, after reviewing the data, determines whether a transformer is Stable or should be marked Replaced, with comments recorded.

Duke Energy’s hybrid model of engineered analytics and machine learning has proven to be an excellent but imperfect tool — far more accurate than either pure AI/ML tools or engineered analytics alone. The transformer state as updated by SMEs is now far more useful in making sound planning decisions.

Success in terms of uptake and use of the hybrid model has been based on a number of activities: data hygiene, collation of data sources, application of standards and guidelines for engineered analytics, data normalization for features to feed the ML, continuous SME input, and refinement in a closed-loop evaluation.

The benefits of the hybrid approach have been to allow SMEs and field technicians to focus on important and critical cases. The system is not perfect, but it has identified bad actors more consistently and more accurately than any previous approach used at Duke Energy.

CONCLUSION

AI/ML tools can provide benefits in interpreting and classifying complex data, but they can be fooled by data that is inconsistent with their training set. The application of ML tools requires input from SMEs who can guide the development in specific applications. Understanding the raw data and making best use of data-hygiene and data-management activities is a base for building an overall analysis system that combines best practices, application of standards/guidelines, and targeted use of AI/ML systems. Doble Engineering has shown that developing targeted AI/ML tools can bring benefit in practical data analysis in the field and that applying targeted ML tools can support SMEs in their asset performance analyses.

ACKNOWLEDGEMENTS

The authors would like to thank our colleagues at Duke Energy, Doble Engineering Company, and many more across the industry who have provided comment, feedback, and discussion of the application of AI techniques. Many thanks to Dr. Mitiche at Glasgow Caledonian University for sharing her results of AI analysis of PD/EMI data.

This article was first published in Transformers Magazine, Special Edition Digitalization, November 2020, www.transformers-magazine.com.

REFERENCES

1.Gulski, Grrot, et al. “Data Mining Techniques To Assess The Condition Of High Voltage Electrical Plant,” Paper 15-107, CIGRE Technical Session, Paris, France, 2002.

2.N. N. Ravi, S. Mohd Drus, and P. S. Krishnan. “Data Mining Techniques for Transformer Failure Prediction Model: A Systematic Literature Review,” IEEE 9th Symposium on Computer Applications & Industrial Electronics, Malaysia, 2019.

3.CIGRE Technical Brochure 292. Data Mining Techniques and Applications in the Power Transmission Field, 2006.

4.CIGRE Technical Brochure 296. Recent Developments In DGA Interpretation, 2006.

5. https://towardsdatascience.com/advantages-and-disadvantages-of-artificial-intelligence-182a5ef6588c

6. https://abad1dea.tumblr.com/post/182455506350/how-math-can-be-racist-giraffing

7. https://www.kdnuggets.com/2018/04/dirty-little-secret-data-scientist.html

8. https://towardsdatascience.com/machine-learning-for-beginners-d247a9420dab

9. https://abad1dea.tumblr.com/post/182455506350/how-math-can-be-racist-giraffing

10. http://demo-visualdialog.cloudcv.org/

11. https://www.alcf.anl.gov/events/scientific-machine-learning-learning-small-data

Dr. Tony McGrail of Doble Engineering Company provides condition, criticality, and risk analysis for substation owner/operators. Previously, he spent over 10 years with National Grid in the UK and the US as a Substation Equipment Specialist, with a focus on power transformers, circuit breakers, and integrated condition monitoring. Tony also took on the role of Substation Asset Manager to identify risks and opportunities for investment in an ageing infrastructure. He is an IET Fellow, past-Chairman of the IET Council, a member of IEEE, ASTM, ISO, CIGRE, and IAM, and a contributor to SFRA and other standards.

Dr. Tony McGrail of Doble Engineering Company provides condition, criticality, and risk analysis for substation owner/operators. Previously, he spent over 10 years with National Grid in the UK and the US as a Substation Equipment Specialist, with a focus on power transformers, circuit breakers, and integrated condition monitoring. Tony also took on the role of Substation Asset Manager to identify risks and opportunities for investment in an ageing infrastructure. He is an IET Fellow, past-Chairman of the IET Council, a member of IEEE, ASTM, ISO, CIGRE, and IAM, and a contributor to SFRA and other standards.

Tom Rhodes graduated from Upper Iowa University with a BS in professional chemistry. He has over 30 years of data analysis for asset management of industrial systems. Tom worked as Implementer/Project Leader at CHAMPS Software implementing new CMMS/asset management technology, and has held titles of Sr. Science and Lab Services Specialist, Scientist, and Lead Engineering Technologist at Duke Energy. He is an author and regular presenter at Doble, IEEE, Distributec, and ARC conferences on oil analysis and asset management.

Tom Rhodes graduated from Upper Iowa University with a BS in professional chemistry. He has over 30 years of data analysis for asset management of industrial systems. Tom worked as Implementer/Project Leader at CHAMPS Software implementing new CMMS/asset management technology, and has held titles of Sr. Science and Lab Services Specialist, Scientist, and Lead Engineering Technologist at Duke Energy. He is an author and regular presenter at Doble, IEEE, Distributec, and ARC conferences on oil analysis and asset management.